Welcome to akid’s documentation!

Fork me at https://github.com/shawnLeeZX/akid !

akid is a Python package written for doing research on neural networks. It also aims to be production ready by taking care of concurrency and communication in distributed computing. It is built on TensorFlow. Combined with GlusterFS, Docker and Kubernetes, it can provide dynamic and elastic scheduling, automatic fault recovery and scalability.

It aims to support fast prototyping and production readiness at the same time. More specifically, it

- supports fast prototyping

  - built-in data pipeline framework that standardizes data preparation and data augmentation

  - arbitrary connectivity schemes (including multi-input and multi-output training), and easy retrieval of parameters and data in the network

  - meta-syntax to generate neural network structure before training

  - support for visualization of the computation graph, weight filters, feature maps, and training dynamics statistics

- aims to be production ready

  - built-in support for distributed computing

  - compatibility with distributed file systems, Docker containers, and distributed operating systems such as Kubernetes. (This feature is mainly a best-practice guide for Kubernetes and similar systems; it is still experimental and not yet available.)

The name comes from the Kid saved by Neo in The Matrix, and from the metaphor of building a learning agent, which in human culture we call a kid.

It distinguishes itself through a unique design, which is described below.

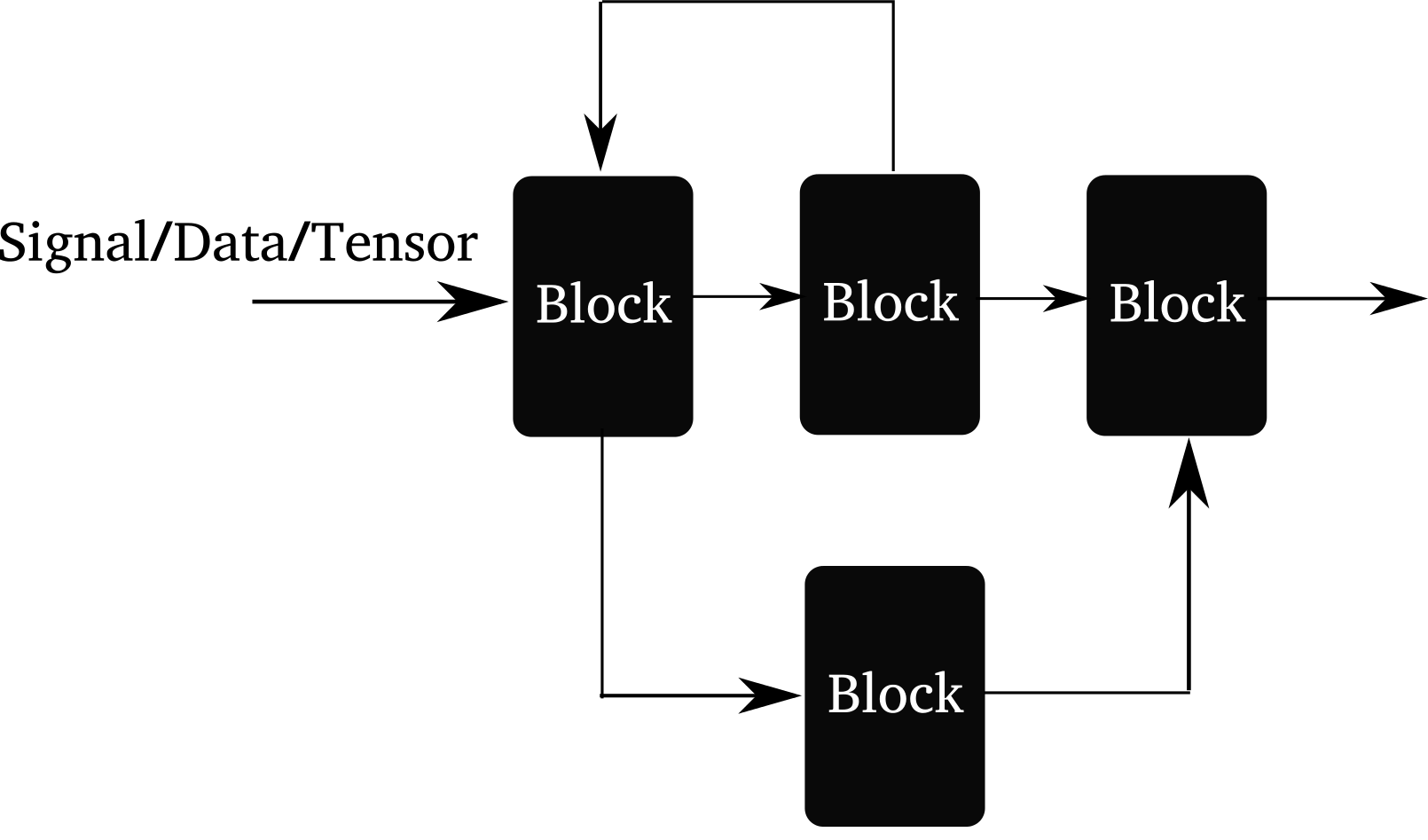

Illustration of the arbitrary connectivity supported by akid. Forward connections, branching and merging, and feedback connections are supported.

akid builds another layer of abstraction on top of Tensor: Block. A Tensor can be seen as the medium (or formalism) through which signals propagate in the digital world, while a Block is the data processing entity that processes inputs and emits outputs.

This coincides with a branch of “ideology” called dataism, which holds that everything in this world is a data processing entity. An interesting account of it may be found in Homo Deus: A Brief History of Tomorrow by Yuval Noah Harari.

The best designs mimic nature. akid tries to reproduce how signals propagate in nature. Information flow can be abstracted as data propagating through inter-connected blocks, each of which processes inputs and emits outputs. For example, a vision classification system is a block that takes images as input and gives classification results. Everything is a Block in akid.

A block can be as simple as a convolutional neural network layer that merely convolves the input data and outputs the result; it can also be as complex as an acyclic graph that inter-connects blocks to build a neural network, or a sequentially linked system of blocks that does data augmentation.
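The range from a single layer to a graph of blocks can be sketched in a few lines of plain Python. This is only an illustration of the idea, not akid's API: the class names `Block`, `Scale`, `Add`, `Sequential` and `Graph`, the `forward` method, and the use of Python lists as stand-ins for tensors are all assumptions made for this example.

```python
class Block:
    """Hypothetical base class: processes inputs and emits outputs."""

    def __init__(self, name):
        self.name = name

    def forward(self, *inputs):
        raise NotImplementedError

    def __call__(self, *inputs):
        return self.forward(*inputs)


class Scale(Block):
    """A simple block: multiplies every element of its input by a constant."""

    def __init__(self, name, factor):
        super().__init__(name)
        self.factor = factor

    def forward(self, x):
        return [self.factor * v for v in x]


class Add(Block):
    """A merging block: element-wise sum of two inputs."""

    def forward(self, a, b):
        return [u + v for u, v in zip(a, b)]


class Sequential(Block):
    """A block made of blocks linked one after another."""

    def __init__(self, name, blocks):
        super().__init__(name)
        self.blocks = list(blocks)

    def forward(self, x):
        for block in self.blocks:
            x = block(x)
        return x


class Graph(Block):
    """A block made of blocks wired as an acyclic graph.

    `nodes` is a list of (block, input_names) pairs in topological order.
    The graph's own input is available under the name "input", and the
    output of the last node is the output of the graph.
    """

    def __init__(self, name, nodes):
        super().__init__(name)
        self.nodes = list(nodes)

    def forward(self, x):
        outputs = {"input": x}
        for block, input_names in self.nodes:
            outputs[block.name] = block(*(outputs[n] for n in input_names))
        return outputs[self.nodes[-1][0].name]


# Sequentially linked blocks: 2x followed by 3x.
chain = Sequential("chain", [Scale("double", 2.0), Scale("triple", 3.0)])
chain_out = chain([1.0, 2.0])

# Branching and merging: the input feeds two branches that are then summed.
branchy = Graph("branchy", [
    (Scale("left", 2.0), ["input"]),
    (Scale("right", 10.0), ["input"]),
    (Add("merge"), ["left", "right"]),
])
graph_out = branchy([1.0, 2.0])
```

Because `Sequential` and `Graph` are themselves blocks in this sketch, they can be nested inside other blocks, which is the sense in which everything is a block.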

Compared with a purely symbolic computation approach, such as the one in TensorFlow, a block can hold state associated with its processing unit. Signals are passed between blocks as tensors or lists of tensors. Much of the heavy lifting is done inside the block (Block and its sub-classes), e.g. pre-condition setup, name scope maintenance, copy functionality for validation and for distributed replicas, setting up and gathering visualization summaries, centralizing variable allocation, and attaching debugging ops when needed.
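To make the contrast between a stateless computation and a stateful block concrete, here is a minimal sketch. It does not use akid or TensorFlow: `center`, `CenteringBlock`, its `momentum` parameter and its `copy` method are hypothetical names chosen for this example, and Python lists stand in for tensors.

```python
from typing import List

Tensor = List[float]  # stand-in for a real tensor type (assumption)


def center(x: Tensor, mean: float) -> Tensor:
    """Pure function: everything it needs must be passed in by the caller."""
    return [v - mean for v in x]


class CenteringBlock:
    """Hypothetical stateful block that keeps a running mean across calls."""

    def __init__(self, name: str, momentum: float = 0.5):
        self.name = name
        self.momentum = momentum
        self.running_mean = 0.0  # state owned by the block
        self.calls = 0           # simple bookkeeping, e.g. for summaries

    def forward(self, inputs: List[Tensor]) -> List[Tensor]:
        """Take a list of tensors and return a list of tensors."""
        outputs = []
        for x in inputs:
            batch_mean = sum(x) / len(x)
            # Update the block's state, then use it to process the input.
            self.running_mean = (self.momentum * self.running_mean
                                 + (1.0 - self.momentum) * batch_mean)
            outputs.append(center(x, self.running_mean))
        self.calls += 1
        return outputs

    def copy(self, name: str) -> "CenteringBlock":
        """Return a replica that starts from the current state,
        for example to run validation alongside training."""
        twin = CenteringBlock(name, self.momentum)
        twin.running_mean = self.running_mean
        return twin


block = CenteringBlock("center")
first = block.forward([[2.0, 4.0]])
second = block.forward([[2.0, 4.0]])
val_block = block.copy("center_val")
```

The same input yields different outputs on the two calls because the block's running mean has changed in between, which is something a pure function such as `center` cannot express on its own.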